The problem of misinformation is not only about content, but about interpretation. In an environment where moderation cannot scale with the volume of information, an alternative emerges: design systems that don't filter information, but help users understand it. This approach led to the development of an LLM-based tool (e.g., using ChatGPT) integrated into a Chrome extension that analyzes text from a media-literacy perspective. Rather than declaring content true or false, the system introduces structure into critical reading, shifting part of the responsibility to the user while providing AI-assisted support.

The internet doesn’t suffer from a lack of access to information; it suffers from a lack of interpretation. Most current solutions focus on moderating content (removing, blocking, or labeling it), but this approach has clear limits. Not all problematic content violates rules. Not all misinformation is automatically detectable. And central review cannot scale indefinitely. A more interesting question arises: what if the system didn’t try to decide for the user, but helped them think better?

From this idea we developed an experimental tool that uses LLMs to analyze texts in real time through the lens of media literacy. It doesn’t seek to replace human judgment; it seeks to structure it.

The current model of information consumption has three structural problems:

This creates a system where users consume information without sufficient tools to evaluate it critically.

Media literacy shouldn’t be an isolated educational process; it should be an ability integrated into the digital experience. LLMs make it possible to build systems that not only answer questions but structure information analysis in real time. This opens the door to moving from a moderation model to a cognitive-assistance model.

Trying to remove all problematic information is unfeasible. The real problem is that users don’t always have a clear framework to interpret what they read.

The problem is not what is published; it is how it is interpreted.

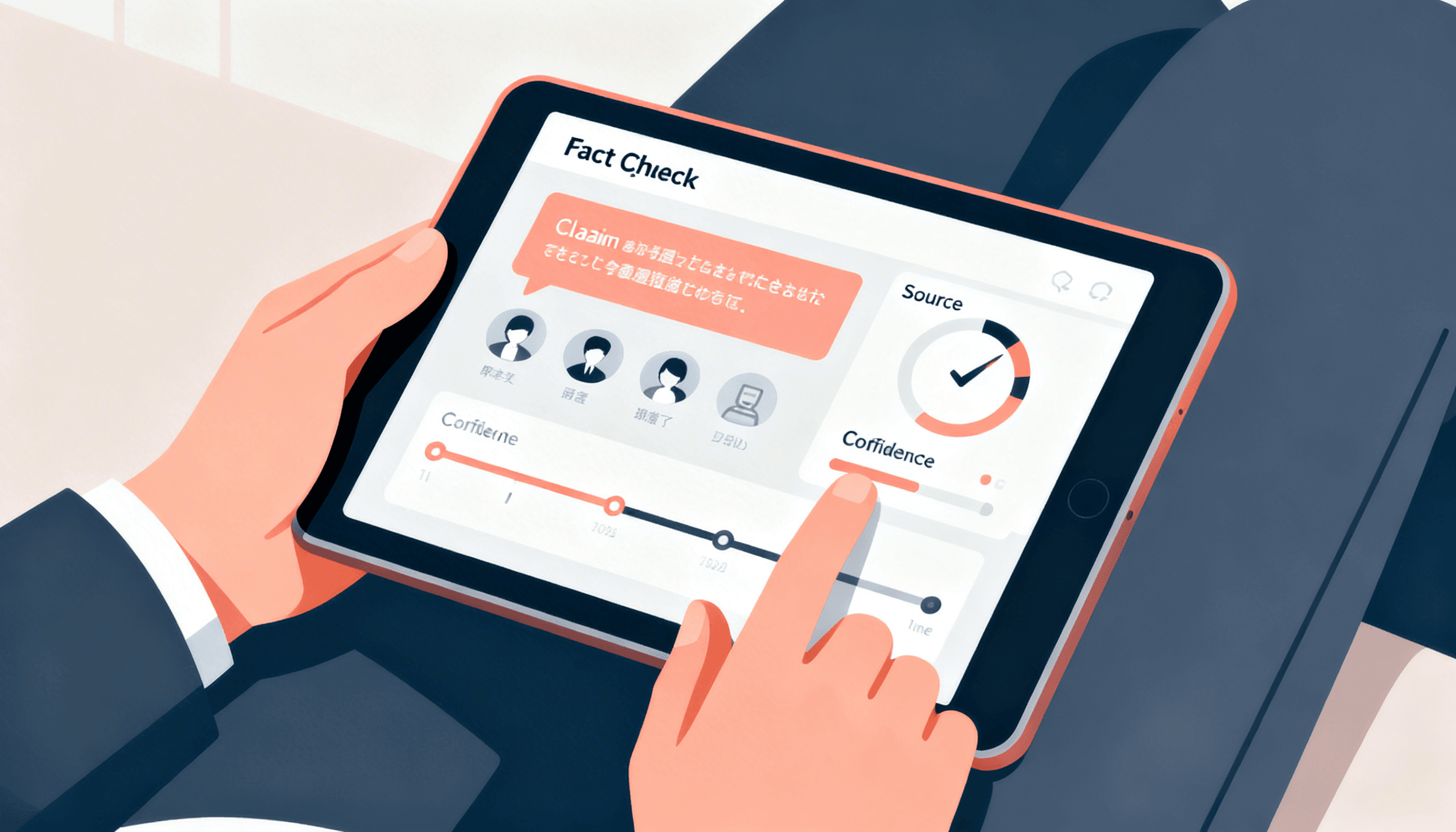

Models like ChatGPT were used not as fact-checkers but as engines for structural analysis of language.

The system does not respond “this is false.” It responds “this should be analyzed more carefully.”
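To make the distinction concrete, here is a minimal sketch of how such a request could be framed. It is not the tool's actual code: every name (`buildAnalysisMessages`, `stripVerdicts`, `DIMENSIONS`) is hypothetical, the three dimensions are illustrative stand-ins for whatever criteria the real prompt uses, and the role/content message shape is simply the common convention of chat-style LLM APIs. No network call is made here.

```typescript
// Hypothetical sketch: none of these identifiers come from the original tool.

/** One chat message in the role/content shape used by chat-style LLM APIs. */
interface ChatMessage {
  role: "system" | "user";
  content: string;
}

/** Illustrative media-literacy dimensions (assumed, not the tool's real criteria). */
const DIMENSIONS = [
  "sourcing: are claims attributed to identifiable sources?",
  "emotional language: does the wording push a reaction rather than inform?",
  "missing context: what would a reader need to know to judge the claim?",
] as const;

/** Phrases that would amount to a truth verdict, which this design avoids. */
const VERDICT_PATTERN = /\b(this is (false|true|fake)|is misinformation)\b/i;

/** Build the messages for a structural (not factual) analysis of `text`. */
function buildAnalysisMessages(text: string): ChatMessage[] {
  const system = [
    "You are a media-literacy assistant.",
    "Do not state whether the text is true or false.",
    "For each dimension, point out what the reader should examine more carefully:",
    ...DIMENSIONS.map((d, i) => `${i + 1}. ${d}`),
  ].join("\n");
  return [
    { role: "system", content: system },
    { role: "user", content: text.trim() },
  ];
}

/** Guard on the model's reply: drop any line that reads as a verdict. */
function stripVerdicts(reply: string): string {
  return reply
    .split("\n")
    .filter((line) => !VERDICT_PATTERN.test(line))
    .join("\n");
}
```

The instruction against verdicts lives in the system message, and `stripVerdicts` is a second, cruder line of defense in case the model ignores it. A simple pattern filter like this will miss paraphrased verdicts, so it should be read as an illustration of the design intent rather than a reliable safeguard.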

When the tool integrates at the moment of reading, literacy stops being theoretical and becomes practical.

Like any LLM-based system, this tool is neither neutral nor perfect. Its limits determine responsible use.

A system like this does not eliminate the problem; it redefines it.

Content moderation is necessary but insufficient. It can neither scale at the pace of information nor cover all its forms. Real change lies in designing systems that amplify users’ critical capacity. This tool demonstrates that LLMs can be used not only to generate content but to structure how we analyze it. It also makes clear that responsibility does not disappear; it is redistributed.

Scaling in the information age is not about controlling what is said; it is about improving how it is understood.